Digital Transformation

The DeepSeek Revolution: Why Open-Weights and Low-Cost Reasoning Models are Winning.

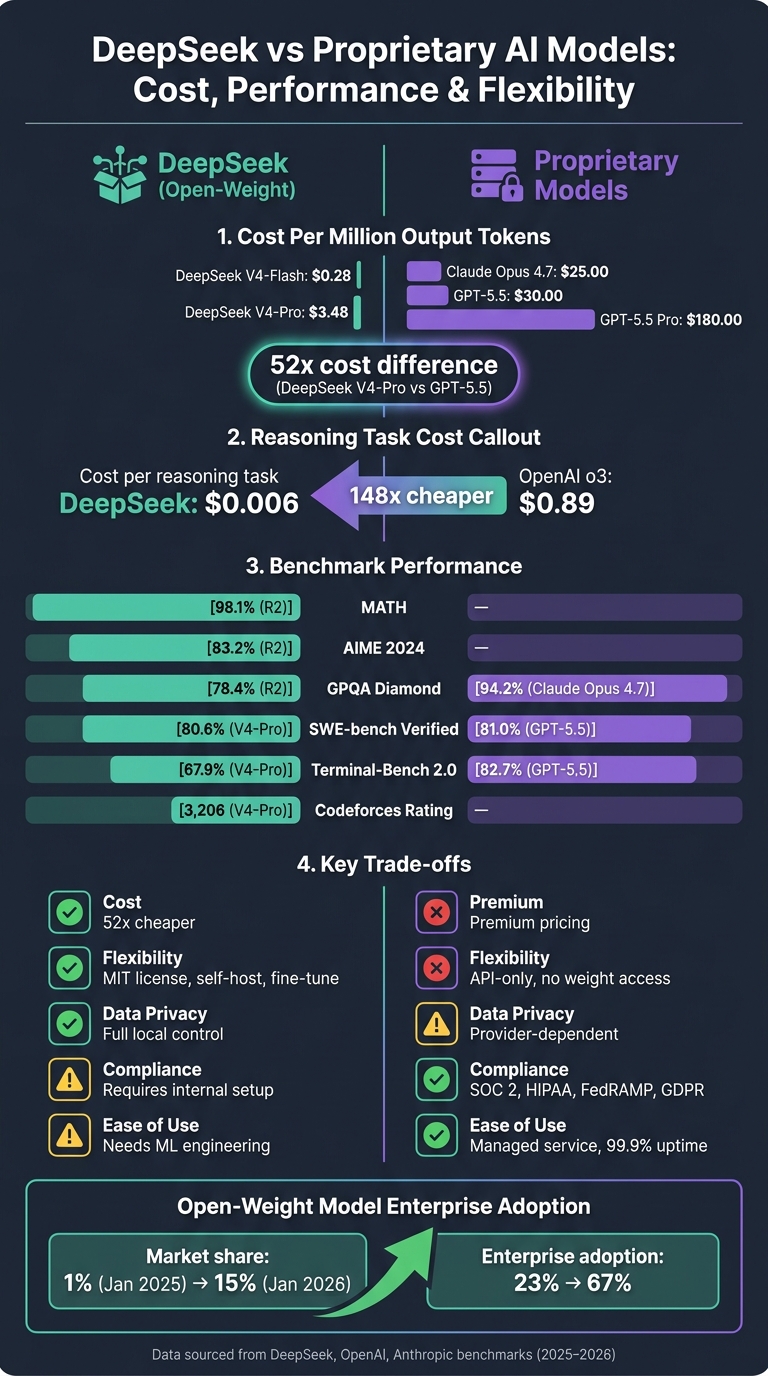

Open-weight AI models are redefining enterprise AI by delivering near-state-of-the-art reasoning at a fraction of proprietary costs.

The DeepSeek Revolution: Why Open-Weights and Low-Cost Reasoning Models are Winning.

Open-weight AI models are reshaping the AI landscape. They offer businesses a cost-effective alternative to proprietary systems, without sacrificing performance. Here's the key takeaway: models like DeepSeek are proving you don’t need to spend $100M+ to achieve cutting-edge results.

- Cost Advantage: DeepSeek's reasoning tasks cost just $0.006 each, compared to $0.89 for OpenAI’s o3 model - a 148x difference.

- Performance: DeepSeek V4-Pro delivers high scores on reasoning benchmarks like MATH (98.1%) and competitive coding, rivaling top-tier proprietary models in many tasks.

- Flexibility: With an MIT license, DeepSeek allows self-hosting and fine-tuning, offering full control over deployment.

- Proprietary Models: While still leading in areas like factual accuracy and compliance, their significantly higher costs (e.g., GPT-5.5 at $35 per million tokens) are becoming a barrier for many enterprises.

The choice comes down to your priorities: cost savings and control with open-weight models like DeepSeek, or reliability and managed services from proprietary systems.

DeepSeek vs Proprietary AI Models: Cost, Performance & Flexibility Compared

DeepSeek AI vs OpenAI Cost/Benefit Analysis (Feb 2025)

sbb-itb-212c9ea

1. DeepSeek

DeepSeek is setting the pace for the open-weight AI movement, showcasing how accessibility and performance can go hand in hand.

Performance on Reasoning Benchmarks

DeepSeek V4-Pro, launched in April/May 2026, is a powerhouse with 1.6 trillion parameters. What’s striking is its efficiency: it activates only 49 billion parameters per token, delivering extensive knowledge while keeping inference costs low.

"DeepSeek-V4-Pro activates about 3% of its parameters per token. The model knows what a 1.6 trillion-parameter system knows; the inference cost is closer to a 49B model." - Dr. Kaoutar El Maghraoui, Research Scientist

In competitive programming, V4-Pro excels with a Codeforces rating of 3,206 and a 93.5% score on LiveCodeBench. Its reasoning capabilities are bolstered by DeepSeek-R2, which achieves 98.1% on MATH and 83.2% on AIME 2024. While it shines in complex reasoning, it still slightly lags behind some closed models in deep factual knowledge and handling the trickiest cross-domain challenges.

These numbers highlight the model's ability to deliver high-level performance while maintaining cost efficiency.

Cost (Inference and Deployment)

DeepSeek V4-Pro stands out for its affordability. Its API cost is $5.22 per million tokens, significantly undercutting proprietary models. For high-volume users, the cost drops further to $3.48 per million tokens. Plus, with context caching, repeated inputs like conversation history are cached at just $0.014 per million tokens, potentially slashing input costs by up to 90%.

| Model | Input (per 1M tokens) | Output (per 1M tokens) | Total Cost |

|---|---|---|---|

| DeepSeek V4-Flash | $0.14 | $0.28 | $0.42 |

| DeepSeek V4-Pro | $1.74 | $3.48 | $5.22 |

| Claude Opus 4.7 | $5.00 | $25.00 | $30.00 |

| GPT-5.5 | $5.00 | $30.00 | $35.00 |

Deployment Flexibility

DeepSeek V4 comes with an MIT license, empowering organizations to download, self-host, and fine-tune the model on their own infrastructure without legal hurdles or vendor lock-in. Deployment options range from a Mac Studio M4 Max with 128GB unified memory for smaller setups to 8× NVIDIA H100 GPUs for running the full V4-Pro model. For teams processing over 15–40 million tokens monthly, self-hosting can match or even undercut API costs. At scales of 100 billion tokens, the savings become substantial.

A noteworthy example: Aurora Mobile (NASDAQ: JG) integrated DeepSeek V4 into its GPTBots.ai platform on the same day the model launched, making it the first US-listed company to achieve same-day deployment of V4. This flexibility underscores the model's potential to bring cost-effective AI to a wider audience.

Ecosystem and Tooling

DeepSeek is designed for easy integration, supporting standard AI frameworks and tools. Its API is OpenAI-compatible, so switching typically involves just updating the base_url and api_key. It works with popular Python tools like Hugging Face, vLLM, and PyTorch, and supports local inference tools such as Ollama, LMStudio, and SGLang. The V4 model is also natively multimodal, capable of processing text, images, and video in a single pass, which is a game-changer for document-heavy workflows.

However, DeepSeek still has room to grow in terms of first-party tools. Unlike some closed models with extensive ecosystems, it currently lacks an integrated suite of products. Still, its compatibility and flexibility make it a strong contender in the AI landscape.

2. Proprietary Frontier Models

While open-weight models have made strides in cost efficiency, proprietary models still hold an edge in controlled settings. Models like GPT-5.5 and Claude Opus 4.7 excel in specific tasks, although the gap in cost between them and open-weight alternatives continues to grow.

Performance on Reasoning Benchmarks

Proprietary models often outperform on reasoning benchmarks. For instance, Claude Opus 4.7 achieves a score of 94.2% on the GPQA Diamond test (focused on graduate-level science questions), significantly surpassing DeepSeek R2's 78.4%. Similarly, GPT-5.5 leads in agentic tool use on Terminal-Bench 2.0 with a score of 82.7%, compared to DeepSeek V4-Pro's 67.9%.

However, the competition tightens when it comes to real-world tasks. On SWE-bench Verified, a test for software engineering scenarios, GPT-5.5 scores 81.0%, with Claude Opus 4.7 and DeepSeek V4-Pro close behind at 80.8% and 80.6%, respectively - just a fraction of a percentage point apart.

"We have hit an inflection point where the best open-source models are now within shouting distance of the best proprietary ones - not just on benchmarks, but on the real-world tasks developers care about." - Simon Willison, Developer and AI Tools Researcher

Next, let’s dive into how these proprietary models compare in terms of cost efficiency.

Cost (Inference and Deployment)

Cost remains a significant hurdle for proprietary models. GPT-5.5 Pro, for example, costs $180.00 per million output tokens, which is about 52 times more expensive than DeepSeek V4-Pro at $3.48. Even the standard GPT-5.5 tier is nearly 9 times pricier at $30.00 per million output tokens.

| Model | Input (per 1M tokens) | Output (per 1M tokens) |

|---|---|---|

| GPT-5.5 Pro | $30.00 | $180.00 |

| GPT-5.5 | $5.00 | $30.00 |

| Claude Opus 4.7 | $5.00 | $25.00 |

| Gemini 3.1 Pro | $2.00 | $12.00 |

OpenAI’s pricing structure also includes charges for internal reasoning tokens, which can add anywhere from 2,000 to 8,000 hidden tokens per complex problem. This growing price gap has become a critical factor for many businesses when deciding which model to use.

"The pricing delta is now the dominant decision factor for most production use cases." - Simon Willison, Developer and AI Tools Researcher

Deployment Flexibility

Proprietary models are exclusively available via API, meaning your data must leave your infrastructure with every request. This limitation can be a dealbreaker for industries like healthcare, finance, or defense, where strict regulations apply. Self-hosting is not an option, nor is modifying the model's underlying weights.

On the flip side, the API-only approach eliminates operational challenges. Organizations don’t need to manage GPU clusters or hire specialized engineers. Additionally, proprietary providers offer perks like 99.9% uptime guarantees and dedicated enterprise support.

Beyond deployment constraints, the ecosystem surrounding proprietary models offers further differentiation.

Ecosystem and Tooling

While open-weight models focus on flexibility, proprietary systems stand out through their compliance features and enterprise-grade support. Tools like OpenAI's Responses API and Anthropic's tool-use schema are well-documented and proven in production. These models also come with built-in compliance for standards like SOC 2, HIPAA, FedRAMP, and GDPR, ensuring secure handling of sensitive workloads.

Anthropic’s enterprise performance highlights this advantage, with annual recurring revenue surging from $9 billion to $30 billion by early 2026. For many organizations, the managed-service model - offering predictable behavior, safety assurances, and dedicated support - makes the higher cost worthwhile.

"The cost premium is effectively a managed service fee." - Particula Tech

Pros and Cons

This section highlights the main advantages and trade-offs of each approach. While DeepSeek shines in cost efficiency, it comes with challenges in reliability, compliance, and operational complexity. On the other hand, proprietary models, though more expensive, simplify deployment for teams without dedicated machine learning infrastructure.

Here’s a breakdown of how the two approaches compare:

| Category | DeepSeek (Open-Weight) | Proprietary Models |

|---|---|---|

| Cost | Significantly cheaper - around 52x less per reasoning task | Higher costs due to premium pricing |

| Performance | Excels in math, coding, and long-context tasks | Strong in general knowledge, creative writing, and reliability in one-shot tasks |

| Flexibility | Highly flexible with MIT licensing for fine-tuning and self-hosting | Limited flexibility; API-only access without weight exposure or self-hosting |

| Ecosystem | Growing, but requires more internal engineering resources | Established ecosystem with robust SDKs, reliable APIs, and compliance certifications like SOC 2 and HIPAA |

| Data Privacy | Full control through local deployment; potential risks with hosted APIs in certain regions | Relies on provider privacy policies and compliance with Western regulations |

The table above highlights the key trade-offs. Below, we explore the operational and regulatory challenges in more detail.

To self-host DeepSeek V4-Pro, you’ll need a multi-GPU cluster (typically 4–8 NVIDIA H100s) and engineering support equivalent to 0.25–0.5 full-time employees per model version. For smaller teams, these requirements could negate the savings from lower token costs.

Another consideration is DeepSeek's censorship measures, which may filter or sanitize outputs on certain topics. While this won’t impact most business use cases, it’s a good idea to test the model with your specific needs before fully committing.

"DeepSeek isn't just a cheaper alternative. It's a strategic choice that gives you control over your AI infrastructure." - CowrieDev, BSWEN

Conclusion

Open-weight models like DeepSeek V4-Pro have evolved far beyond being mere budget-friendly options; they’ve become key players in the AI landscape. From January 2025 to January 2026, these models saw their market share jump from 1% to 15%, while enterprise adoption surged from 23% to 67%. This momentum is undeniable.

These models bring clear advantages in cost and performance, but proprietary models still shine in areas like factual accuracy and reliability. The reality is that no single solution fits every need. For instance, DeepSeek V4-Pro excels in coding tools for developers and offers a much lower cost per output token. On the other hand, proprietary models are better suited for tasks requiring high reliability without human intervention, particularly in complex agent-driven scenarios. This trade-off - DeepSeek’s affordability and adaptability versus the dependable, managed performance of proprietary models - has been at the heart of this discussion.

FAQs

When does self-hosting an open-weight model become cheaper than using an API?

Self-hosting an open-weight model starts to make financial sense when your monthly token usage goes beyond 1.2 billion tokens for chat or 600 million tokens for code completion. These figures take into account not just the operational costs but also the expense of engineering time. For businesses handling large-scale workloads, this can be a more economical alternative to relying on an API.

What hardware do I need to run a model like DeepSeek V4-Pro in production?

To get DeepSeek V4-Pro running smoothly in a production environment, you'll need some serious GPU power. The recommended setup includes 16 NVIDIA H100 80GB GPUs or comparable options with large VRAM capacity. If you're working on a smaller scale, you can opt for fewer GPUs, such as the RTX 5090 or RTX 4090, combined with CPU offloading and at least 64 GB of RAM.

On top of that, make sure you have a powerful multi-core CPU, fast NVMe SSD storage, and enough VRAM and system RAM to keep things running efficiently. These components are crucial for handling the demands of DeepSeek V4-Pro.

How do I choose between an open-weight model and a proprietary model for my use case?

When deciding between open-weight models and proprietary models, it’s important to align your choice with your priorities.

- Open-weight models, such as DeepSeek, are known for their high performance at a lower cost. They also offer flexibility for customization and eliminate ongoing API fees, making them a great choice for those focused on cost-efficiency and adaptability.

- On the other hand, proprietary models often shine in specialized tasks. They come with dedicated support and optimized infrastructure, which can be particularly appealing for enterprise-level needs.

Ultimately, consider factors like cost, performance, customization options, and support availability to determine which approach fits your goals best.